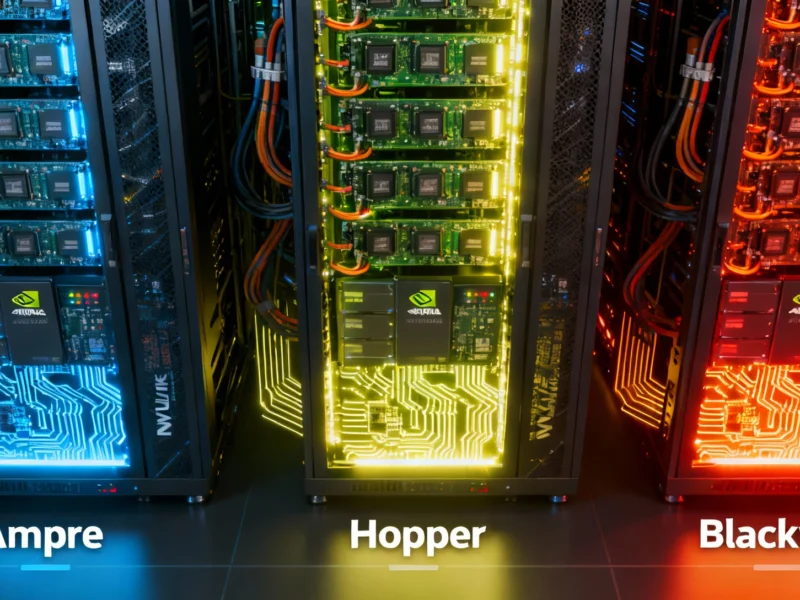

Meta has unveiled an open rack specification for AI infrastructure at the OCP Global Summit, and AMD’s Helios system shows the design in use with MI400 Series GPUs. The collaboration aims to provide scalable, high-performance computing without proprietary lock-in and to support trillion-parameter AI models efficiently.

Open Standards Drive AI Infrastructure Innovation

At the Open Compute Project (OCP) Global Summit in San Jose, Meta introduced specifications for an open rack architecture designed for artificial intelligence systems, according to reports. The Open Rack Wide (ORW) design, which is based on open standards, serves as the foundation for AMD’s Helios rack-scale reference system, which aims to improve scalability and efficiency in large-scale AI data centers.