
Unprecedented Scale in AI Infrastructure

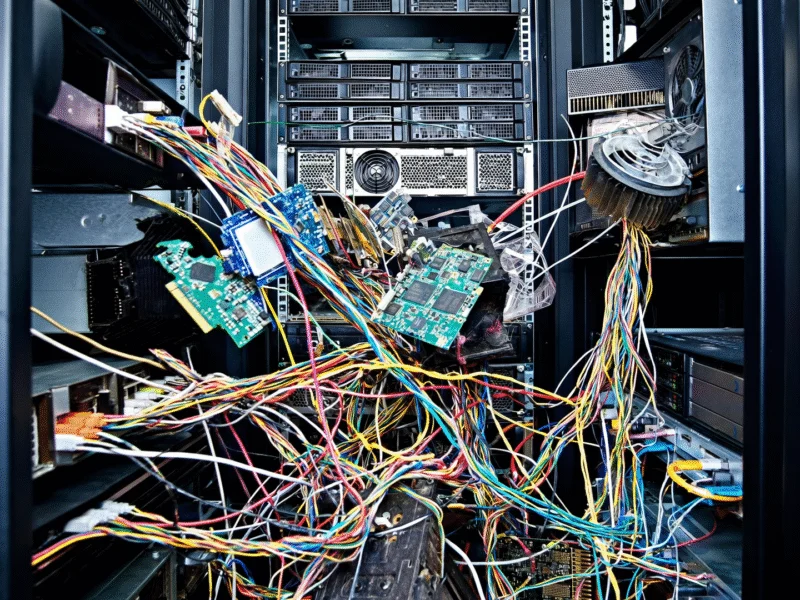

Oracle CTO and chairman Larry Ellison has revealed that the OpenAI and Oracle Stargate Abilene data center project will deploy more than 450,000 Nvidia GB200 GPUs when fully operational, an announcement that signals the accelerating arms race in artificial intelligence infrastructure. The disclosure came during Ellison’s keynote at the Oracle AI World event, where he detailed one of the most ambitious AI computing deployments yet announced. The undertaking underscores how quickly the compute demands of cutting-edge AI models and applications are growing.

The scale of the project becomes apparent from its power requirements. Ellison noted that the facility will draw 1.2 billion watts (1.2GW) of electricity, which he contextualized as “enough power for one million four-bedroom homes in the United States.” He added, “That’s a pretty good-sized city.” The figure indicates that OpenAI and Oracle will occupy the entire capacity of the Crusoe-constructed campus, which is designed to reach that same 1.2GW.
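To put the power figure in per-unit terms, the short Python sketch below divides the quoted 1.2GW by the one million homes in Ellison's comparison and by the 450,000 GPU count. The per-GPU number is only a rough ceiling under two assumptions: that the GPU count is exactly 450,000 (the article says "more than"), and that all facility power is attributed to GPUs, even though cooling, networking, and other systems share it. The function and variable names are illustrative.

```python
# Back-of-the-envelope arithmetic using only the figures quoted in the article.
FACILITY_POWER_W = 1.2e9   # 1.2 GW, as stated by Ellison
HOMES = 1_000_000          # "one million four-bedroom homes"
GPU_COUNT = 450_000        # assumption: article says "more than 450,000"


def per_unit_power(total_watts: float, units: int) -> float:
    """Return the average power per unit, in watts."""
    return total_watts / units


watts_per_home = per_unit_power(FACILITY_POWER_W, HOMES)
# Facility-level average: includes cooling, networking, and other overhead,
# so it is not the power draw of a GPU itself.
watts_per_gpu = per_unit_power(FACILITY_POWER_W, GPU_COUNT)

print(f"Implied average per home: {watts_per_home / 1000:.2f} kW")
print(f"Facility power per GPU:   {watts_per_gpu / 1000:.2f} kW")
```

The comparison implies an average of about 1.2 kW per home, while the whole-facility budget works out to roughly 2.7 kW for each GPU deployed.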

Stargate Abilene: Timeline and Infrastructure Details

The Stargate Abilene project represents a dramatic scaling up from previous projections. Just months earlier, in March 2025, plans indicated the site would host approximately 64,000 GB200s by the end of 2026. The newly revealed figure of more than 450,000 GPUs is roughly a sevenfold increase, reflecting how quickly AI computational requirements are growing. Construction is already well underway, with the first two buildings becoming operational in September 2025. The remaining six buildings are scheduled for completion by mid-2026, completing an eight-building campus.
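The "sevenfold" claim can be checked directly from the two GPU counts. The sketch below is a minimal calculation that assumes the round numbers quoted (64,000 and 450,000) are exact, which the article does not claim; the variable names are illustrative.

```python
# Compare the March 2025 projection with the newly announced GPU count.
MARCH_2025_PLAN = 64_000    # "approximately 64,000 GB200s"
NEW_ANNOUNCEMENT = 450_000  # "over 450,000 GPUs" (treated as exact here)

growth_factor = NEW_ANNOUNCEMENT / MARCH_2025_PLAN
percent_increase = (NEW_ANNOUNCEMENT - MARCH_2025_PLAN) / MARCH_2025_PLAN * 100

print(f"Growth factor:    {growth_factor:.2f}x")
print(f"Percent increase: {percent_increase:.0f}%")
```

The ratio comes out just above 7, so "sevenfold" is a fair rounding; since the announced figure is a lower bound, the true multiple may be somewhat higher.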

During the keynote, a pre-recorded video provided additional technical details about the project’s infrastructure. Power will come from a hybrid supply that combines grid power with natural gas turbines, intended to ensure reliability while meeting the site’s energy demands. The eight separate buildings will be interconnected to support single, massive workloads, a critical requirement for training increasingly complex AI models. This architectural approach mirrors developments in Nvidia’s organizational structure, where specialized teams coordinate to deliver AI hardware and software.

Zettascale10: The Fabric Behind Stargate

The expanded GPU deployment aligns with Oracle’s recent unveiling of its Zettascale10 AI supercomputer, which the company describes as the “fabric underpinning the flagship supercluster built in collaboration with OpenAI in Abilene, Texas, as part of Stargate.” This cloud-based supercomputer, expected to become available in the second half of 2026, is designed to connect hundreds of thousands of Nvidia GPUs across multiple data centers, creating a distributed computing network of unprecedented capability.

The Zettascale10 infrastructure represents Oracle’s strategic response to the computational demands of next-generation AI, positioning the company as a critical infrastructure partner in the AI ecosystem. This development comes amid broader technological advancements across the industry, including AMD’s FSR 4 implementation in gaming consoles and Samsung’s upcoming XR headset launch, all pushing the boundaries of computational performance and user experience.

Broader Industry Implications and Context

The Stargate Abilene project occurs against a backdrop of rapid AI infrastructure expansion across the technology sector. The deployment of 450,000 GB200 GPUs represents not just a quantitative increase in computing power but a qualitative shift in what’s possible with AI model training and inference. This scale of investment signals confidence in the continued growth of AI applications across industries, from healthcare and finance to entertainment and beyond.

The energy requirements of such massive computing facilities have become a focal point of discussion, with the 1.2GW consumption highlighting the need for innovative power solutions. This comes as industries worldwide grapple with energy constraints and sustainability goals, a challenge also reflected in economic policy discussions about balanced growth and resource management.

Meanwhile, the entertainment sector is also evolving its content strategies, as seen in Netflix and Spotify’s podcast partnership, demonstrating how AI and content delivery are converging across multiple domains. The raw computational power being deployed at Stargate Abilene will likely accelerate these cross-industry transformations.

As the AI infrastructure landscape evolves, projects like Stargate Abilene represent critical milestones in the journey toward artificial general intelligence. The collaboration between OpenAI and Oracle, backed by Nvidia’s cutting-edge GPU technology, creates a foundation upon which the next generation of AI breakthroughs will be built. This massive deployment underscores the growing importance of specialized materials and components that enable such advanced computing infrastructure, highlighting the interconnected nature of the global technology supply chain.
