Warning Signs Ignored: The Looming Threat of Digital-Fueled Unrest

Britain faces an imminent risk of repeated civil disturbances unless urgent action is taken to address systemic failures in regulating online misinformation, according to a stark warning from a parliamentary committee. The Science, Innovation and Technology Committee has expressed profound disappointment with the government's response to its recommendations, suggesting current measures fall dangerously short of addressing the sophisticated digital ecosystem that enables harmful content to proliferate.

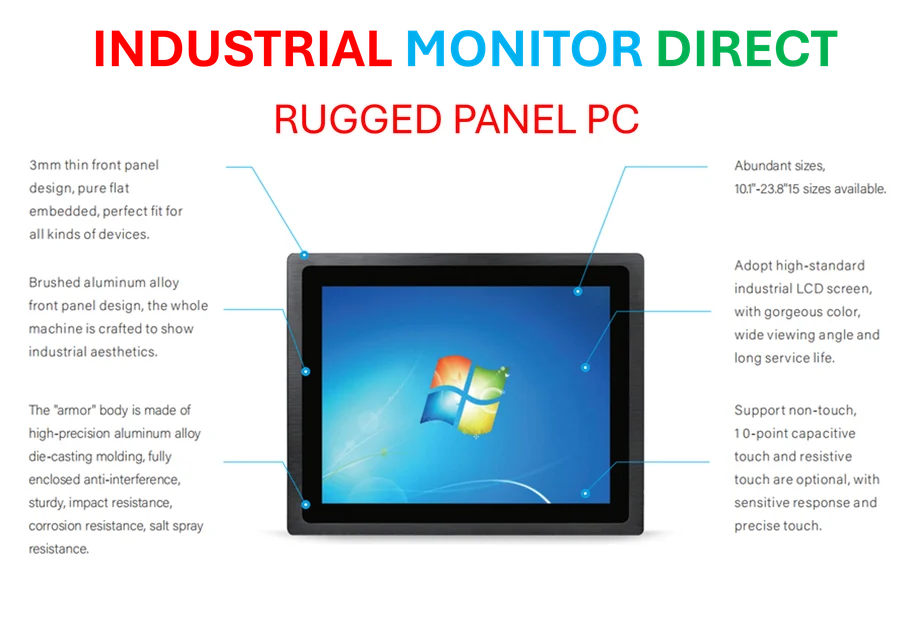

Regulatory Complacency in the Face of Evolving Threats

Committee Chair Chi Onwurah has accused ministers of displaying alarming complacency toward the viral spread of “legal but harmful” misinformation. “Public safety is at risk, and it is only a matter of time until the misinformation-fuelled 2024 summer riots are repeated,” Onwurah stated, highlighting critical gaps in the Online Safety Act that leave citizens vulnerable to coordinated disinformation campaigns.

The government’s rejection of calls for specific legislation addressing generative AI platforms has raised particular concerns among cybersecurity experts. While ministers claim existing frameworks adequately cover AI-generated content, regulatory bodies including Ofcom have acknowledged that current legislation doesn’t fully capture emerging technologies like AI chatbots, and that further consultation with the technology industry is needed.

Algorithmic Amplification and Economic Incentives

At the heart of the committee’s concerns lies the fundamental business model underpinning social media platforms. MPs have identified how advertising revenue systems actively incentivize the creation and distribution of harmful material through engagement-driven algorithms. The government’s refusal to intervene directly in the online advertising market represents what critics describe as a critical failure to address the root economic drivers of misinformation.

“Without addressing the advertising-based business models that incentivise social media companies to algorithmically amplify misinformation, how can we stop it?” Onwurah questioned. Her question points to a wider pattern in which engagement-driven ranking rewards sensational content over factual accuracy.

Generative AI: The New Frontier of Digital Deception

The committee’s report detailed how inflammatory AI-generated images circulated widely following the Southport tragedy, where three children lost their lives. Artificial intelligence tools have dramatically lowered barriers to creating convincing hateful, harmful, or deceptive content at scale. The government’s position that new legislation isn’t required contrasts sharply with expert testimony suggesting existing frameworks are already obsolete given the pace of technological change.

Systemic Solutions for a Fragmented Landscape

The committee had recommended establishing a dedicated body to address social media advertising systems that enable “the monetisation of harmful and misleading content.” This included specific reference to websites that spread misinformation about the Southport attacker’s identity. The government has instead opted for continued review of existing regulations, arguing that the Online Advertising Taskforce would gradually improve transparency.

Research Deficit and Operational Secrecy

Addressing how social media algorithms amplify harmful content, the government deferred to Ofcom’s judgment on necessary research, describing the regulator as “best placed” to determine investigative priorities. Ofcom has acknowledged conducting preliminary work into recommendation algorithms but recognizes the need for broader academic collaboration.

Perhaps most concerning to transparency advocates was the government’s rejection of calls for an annual parliamentary report on the state of online misinformation. Ministers argued such reporting could expose and hinder operational efforts to limit harmful information spread, a position that watchdogs suggest prioritizes bureaucratic secrecy over public accountability.

Defining the Challenge: Misinformation Versus Disinformation

The UK government maintains a crucial distinction between misinformation (inadvertent spread of false information) and disinformation (deliberate creation and dissemination to cause harm). This differentiation informs regulatory approaches but may prove inadequate against hybrid threats that blend both categories through sophisticated digital campaigns.

As Onwurah emphasized, the failures to commit to AI regulation and digital advertising reform represent critical vulnerabilities in Britain’s digital defenses. With technology evolving at unprecedented speed and economic incentives still misaligned with public safety, the committee’s warning is a sobering assessment of the nation’s preparedness for the next wave of digitally driven civil unrest.

This article aggregates information from publicly available sources. All trademarks and copyrights belong to their respective owners.

Note: Featured image is for illustrative purposes only and does not represent any specific product, service, or entity mentioned in this article.